Let’s say, hypothetically, that this was possible, that the laws of silicon were not a thing, and that there was market demand for it for some asinine reason. Let’s also say every OS process scheduler was made to optionally work with it. How would this work?

Personally, I’d say there would be a smol lower clocked CPU on the board that would kick on and take over if you were to pull the big boy CPU out and swap it while the system is running.

Linux already has support for this (CPU hotplug), but it still has multiple limitations, such as requiring multiple CPUs and having a hard limit on how many CPUs can be installed.
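For anyone who wants to poke at the existing Linux support, here is a minimal sketch that reads the kernel's CPU hotplug state through sysfs. The helper names (`parse_cpu_list`, `online_cpus`, `set_cpu_online`) are made up for illustration; the sysfs files under `/sys/devices/system/cpu` are the standard hotplug interface. Note this takes logical CPUs on and off line, which is not the same as physically pulling a chip.

```python
from pathlib import Path

# Standard sysfs location for CPU hotplug state on Linux.
CPU_SYSFS = Path("/sys/devices/system/cpu")


def parse_cpu_list(text: str) -> list[int]:
    """Expand a kernel CPU list such as '0-3,6' into [0, 1, 2, 3, 6]."""
    cpus: list[int] = []
    for part in text.strip().split(","):
        if not part:
            continue  # empty list, e.g. no CPUs offline
        if "-" in part:
            lo, hi = part.split("-")
            cpus.extend(range(int(lo), int(hi) + 1))
        else:
            cpus.append(int(part))
    return cpus


def online_cpus() -> list[int]:
    """Return the logical CPUs the kernel currently has online."""
    return parse_cpu_list((CPU_SYSFS / "online").read_text())


def set_cpu_online(cpu: int, online: bool) -> None:
    """Online or offline one logical CPU.

    Needs root, and the boot CPU (usually cpu0) often cannot be offlined.
    """
    (CPU_SYSFS / f"cpu{cpu}" / "online").write_text("1" if online else "0")


if __name__ == "__main__":
    print("online CPUs:", online_cpus())
```

On a multi-core box, `set_cpu_online(3, False)` as root should make CPU 3 disappear from `online_cpus()` until you set it back.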

Assuming a consumer use case (single CPU socket, no real-time requirements), the easiest approach would be to add three things: an additional soft-off power state (like S2/S3, but with the CPU itself fully powered down, the equivalent of G3 for just the CPU, and things such as RAM isolated), a way to prevent wake-up while the CPU is not connected, and a restart vector that lets the OS tell applications a CPU has been changed so they can safely exit code dependent on feature flags that may not be present. Firmware TPMs stay on the removed CPU, so anything relying on their PCRs gets hosed.

The Linux kernel once again amazes me. It seems to have absolutely everything. I tried looking for videos of this in action from a hands-on perspective to see how it would work, but I get nothing but LTT clickbait garbage.

Seems like one of those neat features that in reality would see little to no use. Without a rework of CPU cooling systems and installation structures, a “hot swap” of the CPU would take minutes to complete at the fastest, and realistically, there are few circumstances that would benefit from a hot swap. The only realistic scenario would be prosumer boards with two or more CPUs that can shift the load, run by someone who is trying to maintain 100% uptime but cannot afford to just shift the work to a second server temporarily.

Too stiff and unlikely to be used by the entry user, and not worth the risk for corporate entities that can afford to just have more servers with buffer to offline one for maintenance.

As for my thoughts on how it’d work, perhaps freezing the entire system somehow, and then dumping all buffers to RAM, then like RA2 said, slowly feeling out what you’ve got, and waking things up one at a time as the RAM buffer is loaded back in. I can only guess at the landmines you’d run into trying this in a live environment, with any slight deviation from what a process expected immediately hanging that process, if not the whole system. I’d guess the new CPU would need as much or more cache space, although I’m already reaching the limit of my computer infrastructure knowledge on the subject.

Yeah, I definitely figured this would have very little use. I’d imagine in such a hypothetical scenario, the CPU cooler would have some sort of mechanism where it’d hold onto the CPU as you’re pulling it off of there and it’d be slotted to where you’d have to pull straight up with no deviation in movement, and there’d be some sort of handle on top of the CPU cooler. Fun thought exercise, regardless.

Computer engineer here. I think physically swappable CPUs could be doable. You would have something like an SD card slot and just be able to click it in and out.

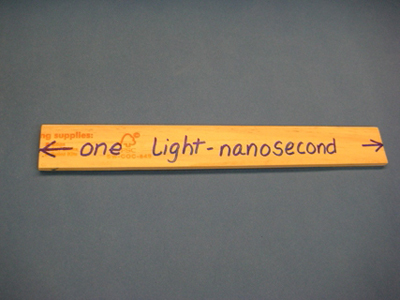

The main problem is the speed of electricity. Signals in a wire move at a sizable fraction of the speed of light, but that is still a hard limit. Meet the light nanosecond, the distance light travels in a nanosecond:

It’s about 5mm less than a foot. If you have a processor running at 4GHz, one clock cycle lasts a quarter of a nanosecond, so the pulse of the clock is going to be low for an 8th of a foot and high for an 8th of a foot. You run into issues where the clock signal is low on one side of the chip and high on the other side of the chip if the chip is too big. And that’s saying nothing of signals that need to get from one side of the chip and back again before the clock cycle is up.
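To put numbers on the light-nanosecond argument, here is a quick back-of-the-envelope sketch. The function names are made up for illustration, and the `velocity_factor` of 0.5 in the last line is an assumed ballpark for a signal on a circuit board trace, not a measured value.

```python
C_M_PER_S = 299_792_458  # speed of light in vacuum, metres per second
FOOT_MM = 304.8          # one international foot, in millimetres


def distance_mm(seconds: float, velocity_factor: float = 1.0) -> float:
    """Distance a signal covers in `seconds`, in millimetres.

    velocity_factor is the fraction of c the signal travels at
    (1.0 = light in vacuum; real wires and traces are slower).
    """
    return C_M_PER_S * velocity_factor * seconds * 1000.0


def half_cycle_mm(clock_hz: float, velocity_factor: float = 1.0) -> float:
    """Distance covered while the clock is high (or low), i.e. half a period."""
    return distance_mm(0.5 / clock_hz, velocity_factor)


if __name__ == "__main__":
    light_ns = distance_mm(1e-9)
    print(f"light nanosecond: {light_ns:.1f} mm")
    print(f"short of a foot by: {FOOT_MM - light_ns:.1f} mm")
    print(f"half cycle at 4 GHz: {half_cycle_mm(4e9):.1f} mm")
    print(f"eighth of a foot: {FOOT_MM / 8:.1f} mm")
    # Assumed velocity factor for a board trace; the real value depends on the material.
    print(f"half cycle at 4 GHz, 0.5c: {half_cycle_mm(4e9, 0.5):.1f} mm")
```

Once you account for signals being slower than light in vacuum, the usable distance per half cycle shrinks to a couple of centimetres, which is why a socket that moves the CPU further from everything else hurts.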

When you increase the distance between the CPU and the things it is communicating with, you run into problems where maybe the whole wire isn’t at the same voltage. This can be solved by running more wires in parallel, but then you get crosstalk problems.

Anyway, I’m rambling. Main problem with multiple CPUs not on the same chip: by far the fastest things in a computer are the GPU and the CPU. Everything else is super slow and the CPU/GPU has to wait for it. Having multiple CPUs try to talk to the same RAM or hard drive would result in a lot of waiting for their turn.

It’s cheaper and a better design to put 24 cores in one CPU than to split them as 12 cores in each of two CPUs.

Most things are still programmed as if they were single-threaded, and most things we want computers to do are sequential and not very multi-threadable.

Very interesting take for what I’d consider to be a semi-shitpost. Yeah, more programs are multithreaded compared to years back, but thread safety still poses a big challenge: when functions execute in parallel and one part finishes sooner than the other expected, you get a plethora of race conditions.
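A minimal sketch of the thread-safety problem, using a shared counter. The `Counter` class and `run` helper are made up for illustration. The lock is what keeps the read-modify-write in `increment` from interleaving between threads; take it out and updates can get lost, depending on the interpreter and timing.

```python
import threading


class Counter:
    """Shared counter whose increments are protected by a lock."""

    def __init__(self) -> None:
        self.value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # `self.value += 1` is a read, an add, and a write. Without the
        # lock, two threads can read the same old value and one update
        # gets lost.
        with self._lock:
            self.value += 1


def run(workers: int = 4, per_worker: int = 10_000) -> int:
    """Hammer one Counter from several threads and return the final value."""
    counter = Counter()

    def work() -> None:
        for _ in range(per_worker):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter.value


if __name__ == "__main__":
    print(run())  # with the lock in place this equals workers * per_worker
```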

For multi CPU systems, there’s NUMA which tries to take advantage of the memory closest to the processor first before going all the way out and fetching the data from a different set of memory all the way across a motherboard. That’s why server boards have each set of DIMMs close to each processor. Though this is coming from a hardware layman, so I’m pretty sure I’m not being entirely accurate here. Low level stuff makes my brain hurt sometimes.
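If you want to see the NUMA layout on a Linux box, the kernel exposes a per-node distance table in sysfs. Here is a rough sketch that ranks nodes from nearest to farthest for a given node. The helper names are made up for illustration, and it assumes node IDs are contiguous starting at 0, which is typical but not guaranteed.

```python
from pathlib import Path

# Standard sysfs location for NUMA node information on Linux.
NODE_SYSFS = Path("/sys/devices/system/node")


def parse_distances(text: str) -> list[int]:
    """Parse one node's distance row, e.g. '10 21' -> [10, 21]."""
    return [int(field) for field in text.split()]


def nodes_by_distance(distances: list[int]) -> list[int]:
    """Return node indices ordered from nearest to farthest.

    Assumes position i in the row corresponds to node i.
    """
    return sorted(range(len(distances)), key=lambda node: distances[node])


def read_node_distances(node: int) -> list[int]:
    """Read the distance row for `node` from sysfs (Linux with NUMA support only)."""
    return parse_distances((NODE_SYSFS / f"node{node}" / "distance").read_text())


if __name__ == "__main__":
    row = read_node_distances(0)
    print("distances from node 0:", row)
    print("preferred order:", nodes_by_distance(row))
```

On a dual-socket board the row for node 0 usually has a smaller number for itself and a larger one for the other socket, which is the "closest memory first" idea in numeric form.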

One of my systems has 2 CPUs if you ever want me to run benchmarks c:

I played around with a dual CPU system. But it just used too much power and it was way too overpowered for my needs. Don’t remember if I ran a benchmark on it though…

I see. I only have mine because more CPUs==more PCIe slots.

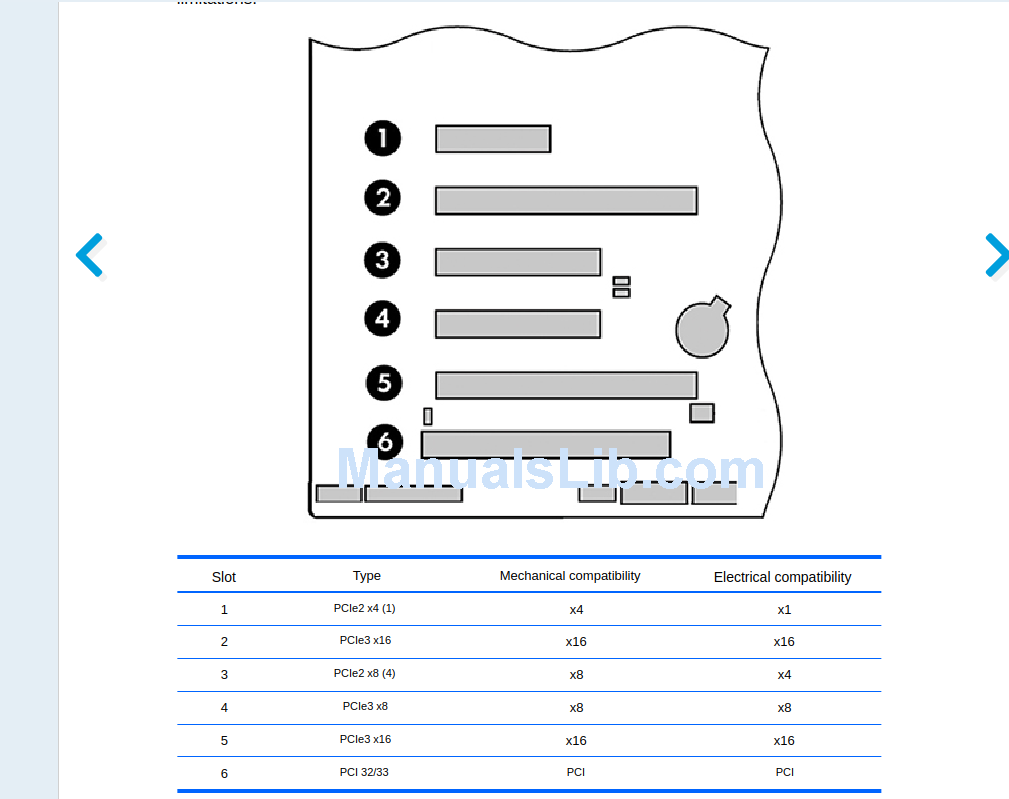

Oooh. How many PCIe slots do you get and what kind?

With the motherboard I am using (Supermicro X9DRH-7F) I get six x8 PCIe slots and one x16 PCIe slot, for a total of 7 slots. All of these are communicating directly with the CPUs, as opposed to some boards where the slots go through the chipset. There are motherboards with even more, but they are more expensive. I got this because I will need the bandwidth for model parallelism using multiple GPUs.

Also, the least power-hungry CPUs you can get for this board are the Xeon E5-2630L, which are each rated at a 60W TDP.

I definitely like the idea of a micro-integrated CPU which would be just enough to keep the machine on. Maybe in a sleep-like state where all the components are technically still running, but not enough to actually be put under any real load.